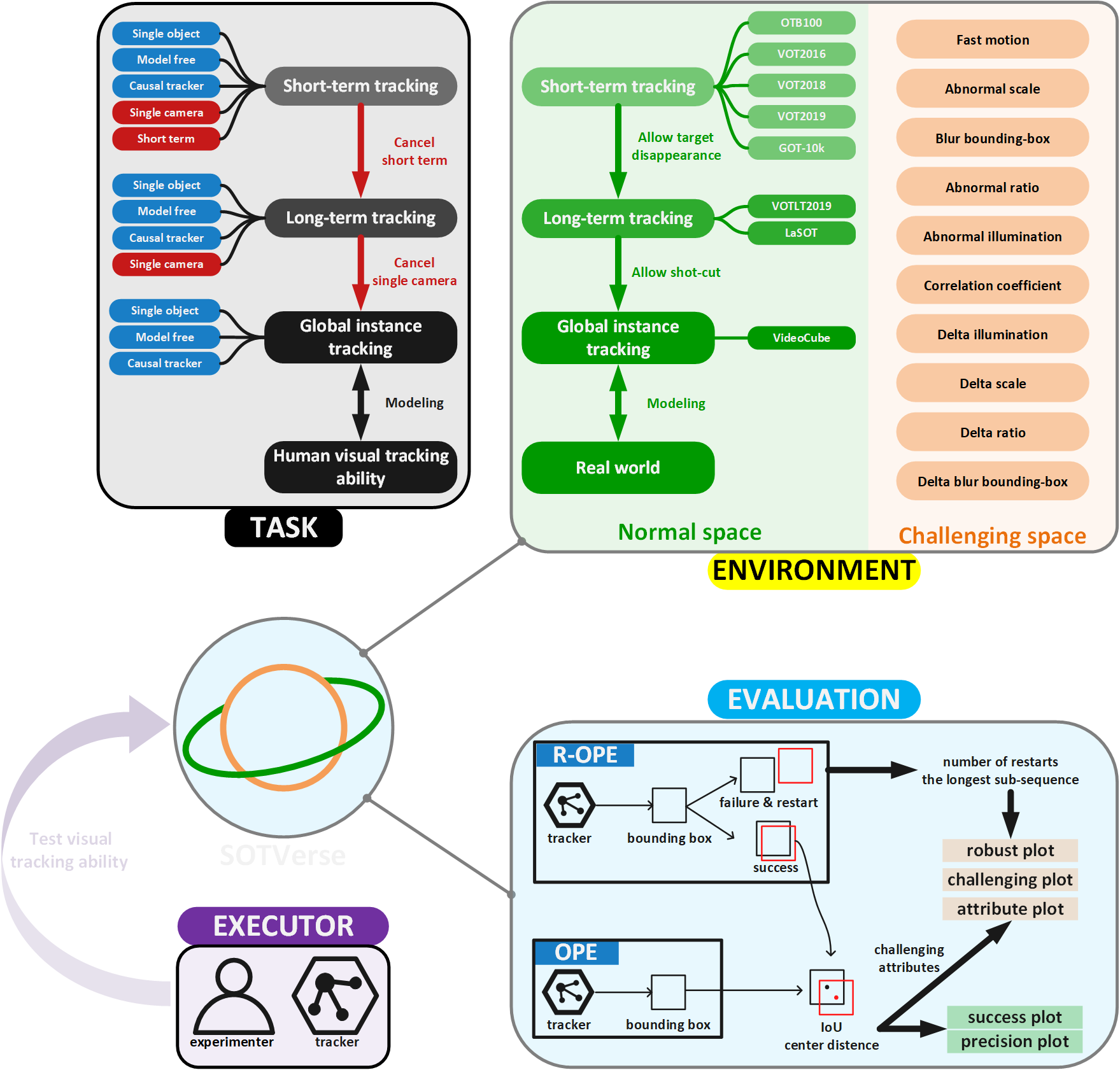

The description paradigm analyzes a task from multiple dimensions, establishes specific operating rules, and provides experimental environments for executors. In other words, the paradigm turns a single task definition into several concrete operational elements.

In this work, we aim to provide a 3E Paradigm that describes computer vision tasks through three components: environment, evaluation, and executor. Here, we first summarize the related work and then explain the motivation of SOTVerse.

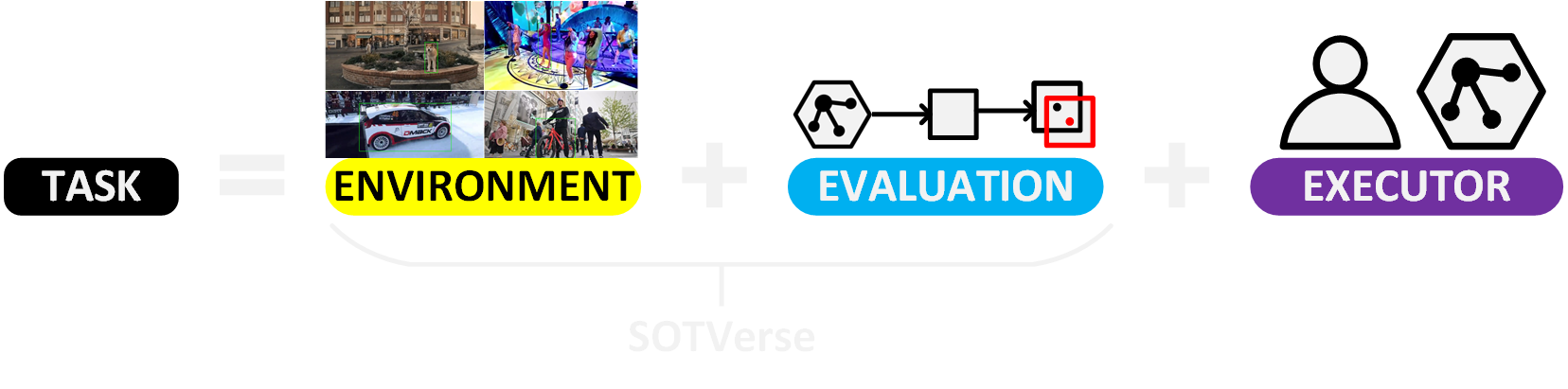

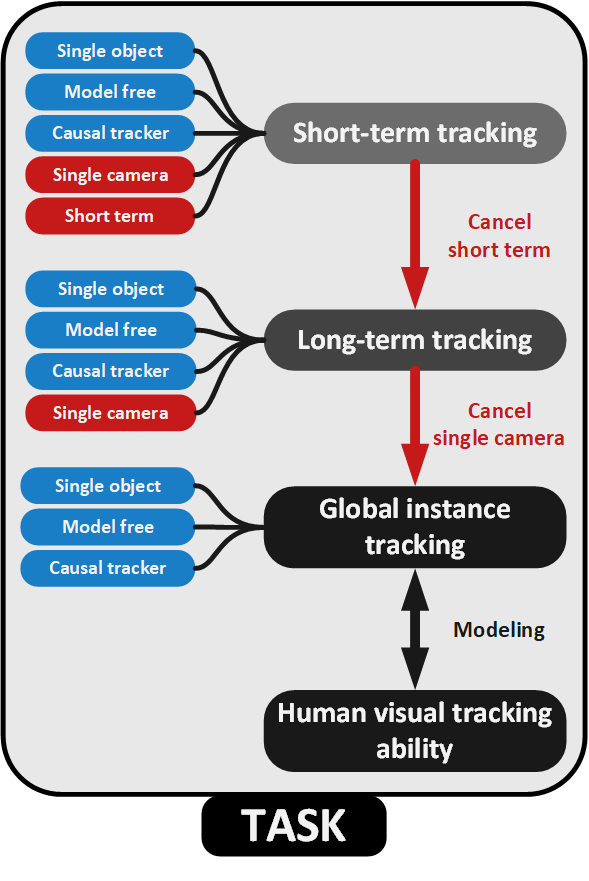

Task

Single object tracking (SOT) is usually defined as follows: given only the initial position of an arbitrary object, continuously locate it in a video sequence. Since 2013, researchers have proposed several influential benchmarks, whose organized datasets and unified metrics have promoted the development of SOT.

However, limited by the state of research at the time, early definitions added extra constraints to simplify the task. The influential VOT competition limits the SOT task to five keywords: single-target, model-free, causal trackers, single-camera, and short-term. The first three keywords (single-target, model-free, causal trackers) correspond to the original definition and are the criteria for distinguishing SOT from other visual tasks (e.g., multi-object tracking and visual instance detection). In comparison, the latter two keywords (single-camera and short-term) are constraints added to simplify research in the early stage.

The development of SOT has been a process of continuously removing hidden constraints and moving closer to the essential definition. Since 2018, some researchers have dropped the short-term constraint and proposed the long-term tracking task. In 2022, researchers further removed the single-camera constraint and proposed the global instance tracking (GIT) task, which searches for an arbitrary user-specified instance in a video without any assumptions about camera or motion consistency. GIT thus realizes the basic definition of SOT, reached by removing these constraints step by step.

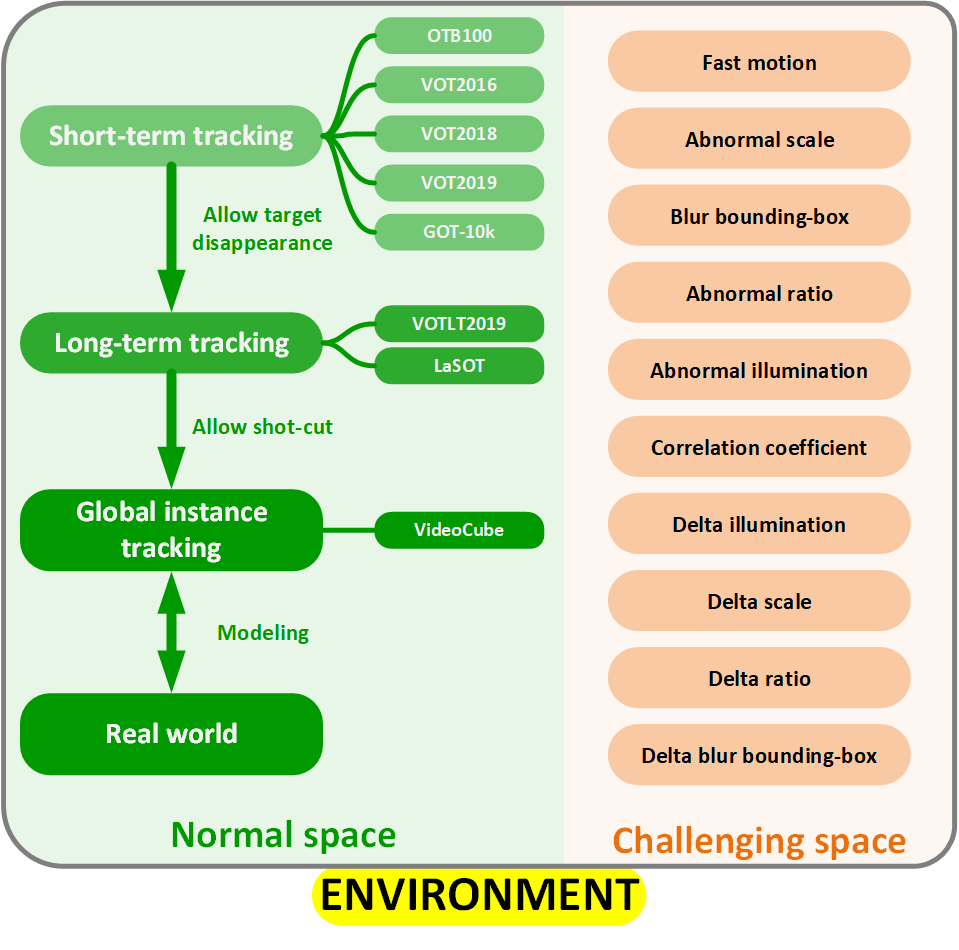

Environment

Related Work

Since 2013, well-organized benchmarks represented by OTB have mainly been designed for short-term tracking, which assumes that the target is never completely occluded and never leaves the view during the video.

Recently, researchers have proposed long-term tracking to meet the demands of real scenarios. However, it is hard to separate short-term from long-term tracking by sequence length alone. Therefore, the VOT competition proposes a new criterion: a task that allows the target to disappear completely can be regarded as long-term tracking.

Nonetheless, the implicit continuous-motion assumption restricts long-term tracking environments to a single camera and a single scene, which is still far from the natural application scenarios of SOT. Thus, the global instance tracking environment named VideoCube was proposed. It includes videos with shot cuts and scene switches to model the real world more comprehensively.
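The three environment types above differ only in which assumptions they drop, which can be sketched as a small classification rule. This is an illustrative sketch, not the annotation format of any benchmark: the `AnnotatedVideo` class, its `shot_cuts` field, and the `task_of` function are hypothetical names, and an absent target is assumed to be marked with `None`.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x, y, width, height)


@dataclass
class AnnotatedVideo:
    """Hypothetical per-video annotation used only for this sketch."""
    # One entry per frame; None marks a frame where the target is fully absent.
    boxes: List[Optional[Box]]
    # Frame indices at which a shot cut or scene switch occurs (assumed annotation).
    shot_cuts: List[int] = field(default_factory=list)


def task_of(video: AnnotatedVideo) -> str:
    """Return the least constrained task whose assumptions the video satisfies."""
    if video.shot_cuts:
        # Camera and motion continuity are broken: no single-camera assumption.
        return "global instance tracking"
    if any(box is None for box in video.boxes):
        # The target disappears completely at least once.
        return "long-term tracking"
    # Target always visible, continuous single-camera footage.
    return "short-term tracking"


visible = (0.0, 0.0, 10.0, 10.0)
short_video = AnnotatedVideo(boxes=[visible, visible, visible])
long_video = AnnotatedVideo(boxes=[visible, None, visible])
git_video = AnnotatedVideo(boxes=[visible, visible, visible], shot_cuts=[2])
```

The rule checks shot cuts first because a video with scene switches breaks the continuous-motion assumption regardless of whether the target also disappears.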

Motivation of Constructing Environment in SOTVerse

Existing works mainly build experimental environments from different perspectives with various rules, but none has tried to unify these environments. When researchers want to analyze problems from new perspectives, they have to build corresponding datasets from scratch, which significantly reduces research efficiency. This situation inspired us to summarize and unify existing environments to construct SOTVerse and help researchers generate experimental environments efficiently.

Evaluation

Related Work

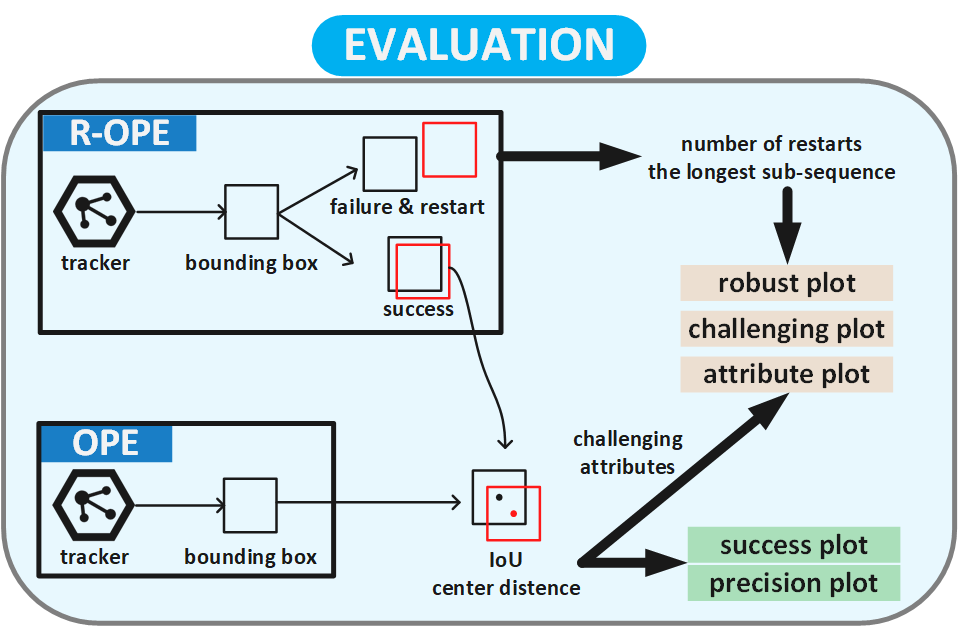

Mechanism: One-pass evaluation (OPE) initializes a tracker in the first frame and continuously records the tracking results. To make use of failure information and analyze the reasons for breakdowns, the OTB benchmark offers a re-initialization mechanism (OPER). Most long-term tracking benchmarks select OPE as the evaluation mechanism. The VOTLT competition, which regards "target disappearance" as the defining feature of long-term tracking, expects trackers to re-locate the target by themselves and therefore excludes the restart mechanism from long-term evaluation.
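The difference between the two mechanisms can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the protocol of OTB or any other benchmark: the `init`/`update` tracker interface, the `StaticTracker` toy class, the failure rule (zero overlap with the ground truth), and re-initialization on the failed frame itself are all choices made for this sketch.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x, y, width, height)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    iw = min(a[0] + a[2], b[0] + b[2]) - max(a[0], b[0])
    ih = min(a[1] + a[3], b[1] + b[3]) - max(a[1], b[1])
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


class StaticTracker:
    """Toy tracker (hypothetical): always returns the box it was initialized with."""

    def init(self, frame, box: Box) -> None:
        self.box = box

    def update(self, frame) -> Box:
        return self.box


def run_ope(tracker, frames: Sequence, gts: Sequence[Box]) -> List[Box]:
    """One-pass evaluation: initialize once, then only record predictions."""
    tracker.init(frames[0], gts[0])
    results = [gts[0]]
    for frame in frames[1:]:
        results.append(tracker.update(frame))
    return results


def run_oper(tracker, frames: Sequence, gts: Sequence[Box], fail_iou: float = 0.0):
    """OPE with restart: re-initialize from the ground truth after a failure.

    A failure is assumed to be an overlap <= fail_iou (an assumption of this sketch).
    """
    tracker.init(frames[0], gts[0])
    results = [gts[0]]
    restarts = 0
    for t in range(1, len(frames)):
        pred = tracker.update(frames[t])
        results.append(pred)  # the failed prediction is still recorded
        if iou(pred, gts[t]) <= fail_iou:
            tracker.init(frames[t], gts[t])
            restarts += 1
    return results, restarts


# Target drifts slightly, then jumps away from the toy tracker's fixed box.
frames = [None] * 4  # image data is irrelevant for the toy tracker
gts = [(0, 0, 10, 10), (2, 0, 10, 10), (20, 0, 10, 10), (22, 0, 10, 10)]
ope_boxes = run_ope(StaticTracker(), frames, gts)
oper_boxes, restarts = run_oper(StaticTracker(), frames, gts)
```

In this toy run the OPE result never recovers after the jump, while the restart variant is re-initialized once and overlaps the target again in the last frame. Long-term evaluation as described above would use `run_ope` only, since the tracker is expected to re-locate the target without help.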

Indicator: Most evaluation indicators fall into three groups: precision, success rate, and robustness. Existing indicators are calculated from the positional relationship between the predicted result $p_t$ and the ground truth $g_t$ in the $t$-th frame. In addition, several long-term tracking benchmarks require trackers to output a disappearance judgment, which is used to calculate tracking accuracy, tracking recall, and tracking F-measure.
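As a rough illustration of how such indicators follow from $p_t$ and $g_t$, the sketch below uses common textbook forms: precision as the fraction of frames whose center distance is within a threshold, success rate as the fraction of frames whose overlap exceeds a threshold, and an overlap-weighted accuracy, recall, and F-measure when the tracker may report the target as absent (`None`). The thresholds, the function names, and the simplified F-measure (no confidence sweep) are assumptions of this sketch and may differ from the formulas each benchmark actually uses.

```python
import math
from typing import List, Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x, y, width, height)


def iou(p: Box, g: Box) -> float:
    """Overlap between prediction p_t and ground truth g_t."""
    iw = min(p[0] + p[2], g[0] + g[2]) - max(p[0], g[0])
    ih = min(p[1] + p[3], g[1] + g[3]) - max(p[1], g[1])
    if iw <= 0 or ih <= 0:
        return 0.0
    inter = iw * ih
    union = p[2] * p[3] + g[2] * g[3] - inter
    return inter / union if union > 0 else 0.0


def center_error(p: Box, g: Box) -> float:
    """Euclidean distance between the centers of p_t and g_t."""
    return math.hypot((p[0] + p[2] / 2) - (g[0] + g[2] / 2),
                      (p[1] + p[3] / 2) - (g[1] + g[3] / 2))


def precision(preds: Sequence[Box], gts: Sequence[Box], dist_thr: float = 20.0) -> float:
    """Fraction of frames with center error <= dist_thr (threshold is an assumption)."""
    return sum(center_error(p, g) <= dist_thr for p, g in zip(preds, gts)) / len(gts)


def success_rate(preds: Sequence[Box], gts: Sequence[Box], iou_thr: float = 0.5) -> float:
    """Fraction of frames with overlap > iou_thr (threshold is an assumption)."""
    return sum(iou(p, g) > iou_thr for p, g in zip(preds, gts)) / len(gts)


def tracking_f_measure(preds: List[Optional[Box]], gts: List[Optional[Box]]):
    """Overlap-weighted accuracy, recall, and F-measure with absence labels.

    None in preds means the tracker judged the target absent;
    None in gts means the target is truly absent in that frame.
    """
    overlaps = [iou(p, g) if p is not None and g is not None else 0.0
                for p, g in zip(preds, gts)]
    n_pred = sum(p is not None for p in preds)
    n_gt = sum(g is not None for g in gts)
    acc = sum(o for o, p in zip(overlaps, preds) if p is not None) / n_pred if n_pred else 0.0
    rec = sum(o for o, g in zip(overlaps, gts) if g is not None) / n_gt if n_gt else 0.0
    f = 2 * acc * rec / (acc + rec) if acc + rec > 0 else 0.0
    return acc, rec, f


box = (0.0, 0.0, 10.0, 10.0)
far = (30.0, 0.0, 10.0, 10.0)
short_term_precision = precision([box, box], [box, far])
short_term_success = success_rate([box, box], [box, far])
accuracy, recall, f_measure = tracking_f_measure([box, None, box, box],
                                                 [box, box, None, box])
```

Robustness is omitted here because its definition depends on the evaluation mechanism, for example on how failures and restarts are counted.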

Motivation of Optimizing Evaluation in SOTVerse

Existing evaluation mechanisms and performance indicators are fragmented. More importantly, the impact of challenging factors in the SOT task has long been recognized but is ignored by existing mechanisms, which mainly focus on overall performance over complete sequences. Therefore, when constructing SOTVerse, we first clarify the calculation formulas of the performance indicators and then conduct experiments on various tasks to explore the applicable scope of different evaluation methods.

Executor

Executors carry out the task in the environments. Most benchmarks are designed for algorithms, while VideoCube has extended the evaluation scope to support human experimenters. Thus, both algorithms and humans can be executors in the SOT task.